Binder from Multiple Angles

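I started learning Linux out of curiosity about open source and operating systems, and along the way picked up some source-reading and driver-writing skills. Now that I am facing Android, that same curiosity should reach all the way down to its source code, and the first thing to conquer is Binder. I hope this helps more people.

Please credit the source when reposting: http://blog.csdn.net/callon_h/article/details/52073268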

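Introduction

The previous post walked from the kernel driver up to the Android app and described how Android reaches hardware through the framework layer.

This post continues from there and covers the Binder knowledge behind the second of those hardware access methods (Binder is not limited to hardware services, as you will see later).

Readers who looked at the source code in the previous post may ask why nothing Binder-related showed up in it. So let me paste a piece of code first and explain it step by step: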

/*

* This file is auto-generated. DO NOT MODIFY.

* Original file: frameworks/base/core/java/android/os/ILedService.aidl

*/

package android.os;

/** {@hide} */

public interface ILedService extends android.os.IInterface

{

/** Local-side IPC implementation stub class. */

public static abstract class Stub extends android.os.Binder implements android.os.ILedService

{

......

}

public int LedOpen() throws android.os.RemoteException;

public int LedOn(int arg) throws android.os.RemoteException;

public int LedOff(int arg) throws android.os.RemoteException;

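}

The most important part here is the Stub class: it extends Binder and also implements the android.os.ILedService interface.

This brings in our protagonist, Binder, which is one of the most important topics in any analysis of the Android source.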

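The Concept of Binder

Article 1, revealing the essence:

Put simply, Binder is a cross-process interaction technology on the Android platform.

From the implementation point of view, the core of Binder is implemented as a Linux driver that runs in kernel mode, which is what gives it its powerful cross-process access capability.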

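Article 2, tracing the roots:

On Linux, processes are isolated from one another: each process's data is independent and unaffected by the others, and if one process crashes it does not take another down with it. Given that, splitting independent system functions into separate processes improves system stability; after all, nobody wants a crash in their own app process to crash the whole phone. Android is built on Linux and takes full advantage of this process isolation.

These Android system processes, from System Server to SurfaceFlinger, each do their own job and together hold up the whole Android system. Android development means dealing with these system processes and building apps on top of the services they provide. Once these processes exist, the next question follows immediately: how do we cooperate with them? The answer is IPC.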

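Linux does a lot of work for IPC and offers quite a few inter-process communication mechanisms. Some of the more common ones are listed below.

- Signals

- Pipes

- Sockets

- Message queues

- Shared memory

Android, however, runs on mobile devices, which brings some particular constraints:

- Tight memory: unlike on the PC, memory on mobile devices is limited, so a suitable mechanism is needed to reclaim idle processes

- Android does not support System V IPC

- Security is a more prominent concern, with the permission issues specific to mobile platforms

- Death notification (being told when a process terminates) must be supported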

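Because of the particularities above, the existing wheels could no longer meet every need, and so Android got Binder. Binder is based on OpenBinder, which Google reworked and optimized. It is an IPC mechanism and component for object-oriented systems, adapted to the characteristics above, and it aims at a system that is extensible, stable, and flexible.

Even so, cross-process calls are still constrained by Linux process isolation. The solution is to place the mechanism in a region that every process shares: the kernel. What the Binder driver provides is a way for processes to use kernel space, mapping addresses in the process to addresses in the kernel; the Linux ioctl function is what carries a call from user space into kernel space. With the support of the Binder driver, cross-process calls become possible.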

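The Architecture of Binder

Article 3, describing the essence

The Binder architecture consists of three modules: the server-side interface, the Binder driver, and the client-side interface.

Binder server: a Binder server is really just an object of the Binder class. Once that object is created, a hidden thread is started internally to receive messages sent by the Binder driver. When a message arrives, the onTransact() function of the Binder object is executed, and different server-side code runs depending on the function's arguments. The arguments of onTransact() are the inputs the client passed to transact().

Binder driver: whenever a server-side Binder object is created, an mRemote object is created in the Binder driver at the same time, and that object is also of the Binder class. Clients always reach the remote server through this mRemote object.

Client: obtains the mRemote reference that corresponds to the remote service in the Binder driver, then calls its transact() method to send a message to the server.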
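The three roles above can be sketched in plain Java. This is only a toy model under stated assumptions: everything runs inside one process, and ToyBinder, ToyDriver, ToyRemote and CODE_DOUBLE are hypothetical names made up for illustration, not Android classes. It shows nothing more than the routing idea: the client holds a handle, and the "driver" forwards transact() to the server's onTransact().

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical stand-in for a server-side Binder object: it only reacts to onTransact(). */
interface ToyBinder {
    boolean onTransact(int code, List<Integer> data, List<Integer> reply);
}

/** Hypothetical stand-in for the Binder driver: maps integer handles to server objects. */
class ToyDriver {
    private final Map<Integer, ToyBinder> nodes = new HashMap<>();
    private int nextHandle = 1;

    /** Called when a server object is created; returns the handle clients will use. */
    int register(ToyBinder server) {
        int handle = nextHandle++;
        nodes.put(handle, server);
        return handle;
    }

    /** Routes a client's transact() to the matching server's onTransact(). */
    boolean transact(int handle, int code, List<Integer> data, List<Integer> reply) {
        ToyBinder target = nodes.get(handle);
        if (target == null) {
            return false; // unknown handle: no server to deliver to
        }
        return target.onTransact(code, data, reply);
    }
}

/** Client-side reference (the role of mRemote): holds a handle, never the server object. */
class ToyRemote {
    private final ToyDriver driver;
    private final int handle;

    ToyRemote(ToyDriver driver, int handle) {
        this.driver = driver;
        this.handle = handle;
    }

    boolean transact(int code, List<Integer> data, List<Integer> reply) {
        return driver.transact(handle, code, data, reply);
    }
}

class ToyArchitectureDemo {
    static final int CODE_DOUBLE = 1; // hypothetical transaction code

    static int callDouble(int value) {
        ToyDriver driver = new ToyDriver();
        // Server: picks the code path from the transaction code, as onTransact() does.
        ToyBinder server = (code, in, out) -> {
            if (code != CODE_DOUBLE) {
                return false;
            }
            out.add(in.get(0) * 2);
            return true;
        };
        ToyRemote remote = new ToyRemote(driver, driver.register(server));

        // Client: packs the input, calls transact(), then unpacks the reply.
        List<Integer> data = new ArrayList<>();
        List<Integer> reply = new ArrayList<>();
        data.add(value);
        if (!remote.transact(CODE_DOUBLE, data, reply)) {
            throw new IllegalStateException("transaction failed");
        }
        return reply.get(0);
    }

    public static void main(String[] args) {
        System.out.println(callDouble(21)); // prints 42
    }
}
```

The real driver lives in the kernel and crosses process boundaries; the map lookup here only stands in for that step.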

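That is enough architecture for now. Next, let's use the Led example from the previous post to understand it.

Binder in the Led Example in Detail:

The Binder architecture in AIDL:

First, here is the full ILedService.java, with some explanation: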

/*

* This file is auto-generated. DO NOT MODIFY.

* Original file: frameworks/base/core/java/android/os/ILedService.aidl

*/

package android.os;

/** {@hide} */

public interface ILedService extends android.os.IInterface

{

/** Local-side IPC implementation stub class. */

public static abstract class Stub extends android.os.Binder implements android.os.ILedService

{

private static final java.lang.String DESCRIPTOR = "android.os.ILedService";

/** Construct the stub at attach it to the interface. */

public Stub()

{

this.attachInterface(this, DESCRIPTOR);

}

/**

* Cast an IBinder object into an android.os.ILedService interface,

* generating a proxy if needed.

*/

public static android.os.ILedService asInterface(android.os.IBinder obj)

{

if ((obj==null)) {

return null;

}

android.os.IInterface iin = obj.queryLocalInterface(DESCRIPTOR);

if (((iin!=null)&&(iin instanceof android.os.ILedService))) {

return ((android.os.ILedService)iin);

}

return new android.os.ILedService.Stub.Proxy(obj);

}

@Override public android.os.IBinder asBinder()

{

return this;

}

@Override public boolean onTransact(int code, android.os.Parcel data, android.os.Parcel reply, int flags) throws android.os.RemoteException

{

switch (code)

{

case INTERFACE_TRANSACTION:

{

reply.writeString(DESCRIPTOR);

return true;

}

case TRANSACTION_LedOpen:

{

data.enforceInterface(DESCRIPTOR);

int _result = this.LedOpen();

reply.writeNoException();

reply.writeInt(_result);

return true;

}

case TRANSACTION_LedOn:

{

data.enforceInterface(DESCRIPTOR);

int _arg0;

_arg0 = data.readInt();

int _result = this.LedOn(_arg0);

reply.writeNoException();

reply.writeInt(_result);

return true;

}

case TRANSACTION_LedOff:

{

data.enforceInterface(DESCRIPTOR);

int _arg0;

_arg0 = data.readInt();

int _result = this.LedOff(_arg0);

reply.writeNoException();

reply.writeInt(_result);

return true;

}

}

return super.onTransact(code, data, reply, flags);

}

private static class Proxy implements android.os.ILedService

{

private android.os.IBinder mRemote;

Proxy(android.os.IBinder remote)

{

mRemote = remote;

}

@Override public android.os.IBinder asBinder()

{

return mRemote;

}

public java.lang.String getInterfaceDescriptor()

{

return DESCRIPTOR;

}

@Override public int LedOpen() throws android.os.RemoteException

{

android.os.Parcel _data = android.os.Parcel.obtain();

android.os.Parcel _reply = android.os.Parcel.obtain();

int _result;

try {

_data.writeInterfaceToken(DESCRIPTOR);

mRemote.transact(Stub.TRANSACTION_LedOpen, _data, _reply, 0);

_reply.readException();

_result = _reply.readInt();

}

finally {

_reply.recycle();

_data.recycle();

}

return _result;

}

@Override public int LedOn(int arg) throws android.os.RemoteException

{

android.os.Parcel _data = android.os.Parcel.obtain();

android.os.Parcel _reply = android.os.Parcel.obtain();

int _result;

try {

_data.writeInterfaceToken(DESCRIPTOR);

_data.writeInt(arg);

mRemote.transact(Stub.TRANSACTION_LedOn, _data, _reply, 0);

_reply.readException();

_result = _reply.readInt();

}

finally {

_reply.recycle();

_data.recycle();

}

return _result;

}

@Override public int LedOff(int arg) throws android.os.RemoteException

{

android.os.Parcel _data = android.os.Parcel.obtain();

android.os.Parcel _reply = android.os.Parcel.obtain();

int _result;

try {

_data.writeInterfaceToken(DESCRIPTOR);

_data.writeInt(arg);

mRemote.transact(Stub.TRANSACTION_LedOff, _data, _reply, 0);

_reply.readException();

_result = _reply.readInt();

}

finally {

_reply.recycle();

_data.recycle();

}

return _result;

}

}

static final int TRANSACTION_LedOpen = (android.os.IBinder.FIRST_CALL_TRANSACTION + 0);

static final int TRANSACTION_LedOn = (android.os.IBinder.FIRST_CALL_TRANSACTION + 1);

static final int TRANSACTION_LedOff = (android.os.IBinder.FIRST_CALL_TRANSACTION + 2);

}

public int LedOpen() throws android.os.RemoteException;

public int LedOn(int arg) throws android.os.RemoteException;

public int LedOff(int arg) throws android.os.RemoteException;

}

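Why can I say that the client needs the Proxy rather than the Stub? Or, put the other way around, why does the server need the Stub rather than the Proxy?

Reason 1. Let's first focus on this method in the Proxy class:

@Override public int LedOpen() throws android.os.RemoteException

(LedOn and LedOff work the same way.) It makes this call:

mRemote.transact(Stub.TRANSACTION_LedOpen, _data, _reply, 0);

The arguments passed to transact() arrive on the other side in the Stub's onTransact():

@Override public boolean onTransact(int code, android.os.Parcel data, android.os.Parcel reply, int flags) throws android.os.RemoteException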

{

switch (code)

{

case INTERFACE_TRANSACTION:

{

reply.writeString(DESCRIPTOR);

return true;

}

case TRANSACTION_LedOpen:

{

data.enforceInterface(DESCRIPTOR);

int _result = this.LedOpen();

reply.writeNoException();

reply.writeInt(_result);

return true;

}

case TRANSACTION_LedOn:

{

data.enforceInterface(DESCRIPTOR);

int _arg0;

_arg0 = data.readInt();

int _result = this.LedOn(_arg0);

reply.writeNoException();

reply.writeInt(_result);

return true;

}

case TRANSACTION_LedOff:

{

data.enforceInterface(DESCRIPTOR);

int _arg0;

_arg0 = data.readInt();

int _result = this.LedOff(_arg0);

reply.writeNoException();

reply.writeInt(_result);

return true;

}

}

return super.onTransact(code, data, reply, flags);

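}

In the TRANSACTION_LedOpen case, onTransact() calls this.LedOpen(), the interface method that the server side has to implement:

public int LedOpen() throws android.os.RemoteException;

Reason 2. Let's look at which class LedService actually inherits from: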

public class LedService extends ILedService.Stub

Reason 3. Proxy means an agent acting on someone else's behalf, and Stub means a ticket stub.
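The division of labor between Proxy and Stub can be illustrated with a small plain-Java sketch. It is not the AIDL-generated code: MiniParcel, MiniLedService, MiniLedStub, MiniLedProxy and MiniLedServiceImpl are hypothetical names, the "parcel" is just a queue of ints, and the proxy calls the stub directly where real Binder would go through the driver. What it tries to show is that the proxy only packs arguments and forwards them, while the stub unpacks them and calls the real implementation.

```java
import java.util.ArrayDeque;
import java.util.Deque;

/** Hypothetical, much-simplified Parcel: a FIFO queue of ints. */
class MiniParcel {
    private final Deque<Integer> values = new ArrayDeque<>();

    void writeInt(int v) { values.addLast(v); }

    int readInt() { return values.removeFirst(); }
}

/** The shared interface, loosely mirroring LedOn/LedOff from ILedService. */
interface MiniLedService {
    int ledOn(int which);

    int ledOff(int which);
}

/** Server side: unpacks the request and dispatches to the real implementation. */
abstract class MiniLedStub implements MiniLedService {
    static final int TRANSACTION_ledOn = 1;
    static final int TRANSACTION_ledOff = 2;

    boolean onTransact(int code, MiniParcel data, MiniParcel reply) {
        switch (code) {
            case TRANSACTION_ledOn:
                reply.writeInt(ledOn(data.readInt()));
                return true;
            case TRANSACTION_ledOff:
                reply.writeInt(ledOff(data.readInt()));
                return true;
            default:
                return false;
        }
    }
}

/** Client side: packs arguments and forwards them; contains no LED logic at all. */
class MiniLedProxy implements MiniLedService {
    // Assumption: a direct reference stands in for the IBinder handle of real Binder.
    private final MiniLedStub remote;

    MiniLedProxy(MiniLedStub remote) { this.remote = remote; }

    private int call(int code, int arg) {
        MiniParcel data = new MiniParcel();
        MiniParcel reply = new MiniParcel();
        data.writeInt(arg);
        if (!remote.onTransact(code, data, reply)) {
            throw new IllegalStateException("unknown transaction " + code);
        }
        return reply.readInt();
    }

    @Override public int ledOn(int which) { return call(MiniLedStub.TRANSACTION_ledOn, which); }

    @Override public int ledOff(int which) { return call(MiniLedStub.TRANSACTION_ledOff, which); }
}

/** The concrete service, in the role of "LedService extends ILedService.Stub". */
class MiniLedServiceImpl extends MiniLedStub {
    private final boolean[] leds = new boolean[4];

    @Override public int ledOn(int which) { leds[which] = true; return 0; }

    @Override public int ledOff(int which) { leds[which] = false; return 0; }

    boolean isOn(int which) { return leds[which]; }
}

class MiniProxyStubDemo {
    public static void main(String[] args) {
        MiniLedServiceImpl service = new MiniLedServiceImpl();
        MiniLedService client = new MiniLedProxy(service);
        client.ledOn(2);
        System.out.println("LED 2 on: " + service.isOn(2));
    }
}
```

The client code only ever sees the MiniLedService interface, which is why it can be handed a proxy without noticing the difference.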

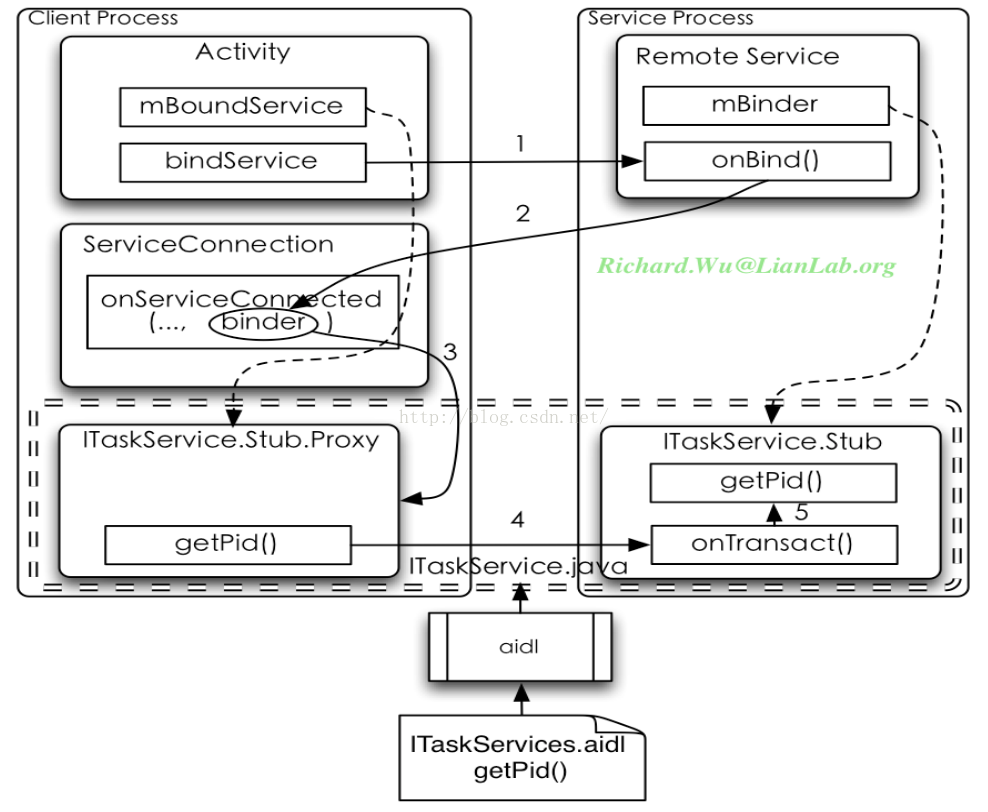

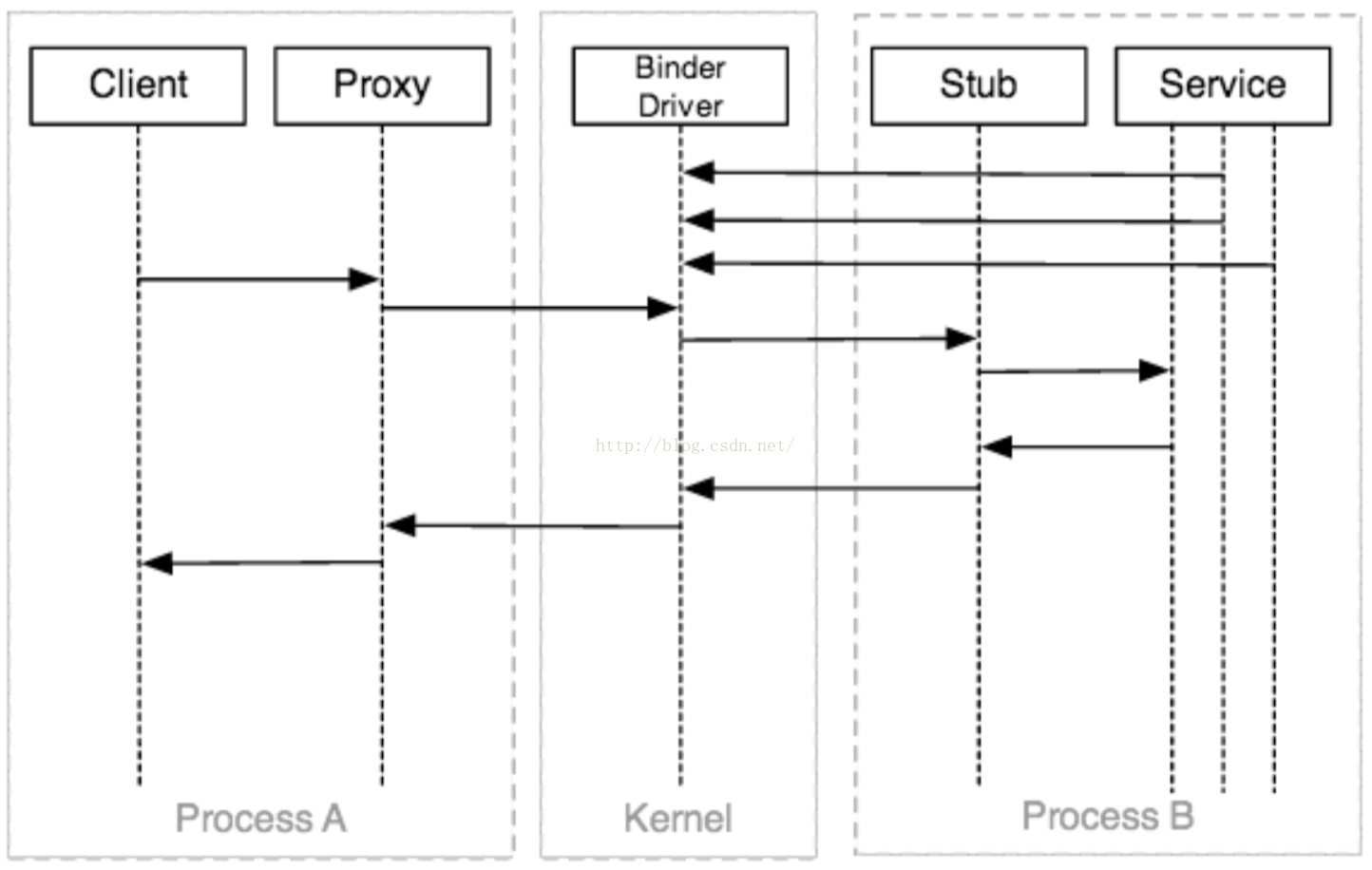

总结一下(1图取自文章4,2图取自文章5),个人觉得用图的方式总结是最好的:

该图中的Proxy为之前所述的ILedService.Stub.Proxy,Stub为ILedService.Stub,Client你可以认为是调用

iLedService = ILedService.Stub.asInterface(ServiceManager.getService("led"));

而上图是我们正常的service访问流程图,正常的service一般都含有IBinder mBinder对象和OnBind()方法,而在app的activity端有onServiceConnection()对应之,其中getPid()对应于我们的LedOpen()等AIDL定义的接口方法。这些都只是框架,如果要到代码层分析还需要了解一些Binder中的概念。

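Article 1, Binder concepts in more depth

BpBinder: the class for the proxy side in Binder, written in C++, derived from IBinder

BBinder: the class for the responding side in Binder, written in C++, derived from IBinder

BpInterface: a process does not deal with BpBinder (the Binder proxy) directly; it makes remote calls by calling member functions of BpInterface (the interface proxy)

BnInterface: BnInterface inherits from BBinder; it does not use aggregation to contain a BBinder object

ProcessState: each process has one global ProcessState object. As the name "process state" suggests, there is naturally exactly one ProcessState per process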
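The inheritance-versus-aggregation distinction in these definitions may be easier to see in code. The sketch below is written in Java purely for illustration (the real classes are C++), and every Sketch* name is hypothetical. It assumes a direct object reference where a real BpBinder would hold a driver handle.

```java
/** Hypothetical minimal IBinder: one transact() entry point. */
interface SketchIBinder {
    String transact(String request);
}

/** Role of BBinder: the responding side, which dispatches to onTransact(). */
abstract class SketchBBinder implements SketchIBinder {
    @Override
    public String transact(String request) {
        return onTransact(request);
    }

    protected abstract String onTransact(String request);
}

/** Role of BpBinder: the proxy side. A plain reference stands in for the handle. */
final class SketchBpBinder implements SketchIBinder {
    private final SketchIBinder target;

    SketchBpBinder(SketchIBinder target) {
        this.target = target;
    }

    @Override
    public String transact(String request) {
        return target.transact(request);
    }
}

/** Role of BnInterface: it IS a BBinder (inheritance). */
class SketchBnEcho extends SketchBBinder {
    @Override
    protected String onTransact(String request) {
        return "echo:" + request;
    }
}

/** Role of BpInterface: it HAS a BpBinder (aggregation), reached through remote(). */
class SketchBpEcho {
    private final SketchBpBinder remote;

    SketchBpEcho(SketchBpBinder remote) {
        this.remote = remote;
    }

    SketchIBinder remote() {
        return remote;
    }

    /** The caller uses this member function and never touches the BpBinder directly. */
    String echo(String text) {
        return remote().transact(text);
    }
}

class SketchInterfaceDemo {
    public static void main(String[] args) {
        SketchBnEcho service = new SketchBnEcho();
        SketchBpEcho client = new SketchBpEcho(new SketchBpBinder(service));
        System.out.println(client.echo("hi"));
    }
}
```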

Binder at the Code Level in Led

As the previous post showed, one more important step in completing LedService is registering it with ServiceManager:

led = new LedService();

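ServiceManager.addService("led", led);

Services are managed centrally by ServiceManager. When a client process wants to talk to one of these services, it first looks the service up in ServiceManagerService, then converts the returned value to the corresponding interface, and after that it can communicate with the service. That is exactly the statement we use in the app:

iLedService = ILedService.Stub.asInterface(ServiceManager.getService("led"));

ServiceManagerService is similar to a DNS server: every PC asks a DNS server for the IP address of a domain name it cannot resolve itself, then uses the returned IP address for access. Likewise, a client first asks ServiceManagerService for the handle of the service it needs (the handle plays the role of the IP address), and only then talks to that service. The call

ServiceManager.getService("led")

is implemented as follows: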

public final class ServiceManager {

private static final String TAG = "ServiceManager";

private static IServiceManager sServiceManager;

private static HashMap<String, IBinder> sCache = new HashMap<String, IBinder>();

private static IServiceManager getIServiceManager() {

if (sServiceManager != null) {

return sServiceManager;

}

// Find the service manager

sServiceManager = ServiceManagerNative.asInterface(BinderInternal.getContextObject());

return sServiceManager;

}

/**

* Returns a reference to a service with the given name.

*

* @param name the name of the service to get

* @return a reference to the service, or <code>null</code> if the service doesn't exist

*/

public static IBinder getService(String name) {

try {

IBinder service = sCache.get(name);

if (service != null) {

return service;

} else {

return getIServiceManager().getService(name);

}

} catch (RemoteException e) {

Log.e(TAG, "error in getService", e);

}

return null;

}

...

...

}

Analyzing further, first note that getIServiceManager() actually calls

// Find the service manager

sServiceManager = ServiceManagerNative.asInterface(BinderInternal.getContextObject());

public abstract class ServiceManagerNative extends Binder implements IServiceManager

{

/**

* Cast a Binder object into a service manager interface, generating

* a proxy if needed.

*/

static public IServiceManager asInterface(IBinder obj)

{

if (obj == null) {

return null;

}

IServiceManager in =

(IServiceManager)obj.queryLocalInterface(descriptor);

if (in != null) {

return in;

}

return new ServiceManagerProxy(obj);

}

...

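}

For a remote binder, what asInterface ends up returning is a ServiceManagerProxy, an implementation of IServiceManager. So our getIServiceManager().getService(name) is equivalent to ServiceManagerProxy(obj).getService(name), where obj is our BinderInternal.getContextObject(). Going further down: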

class ServiceManagerProxy implements IServiceManager {

public ServiceManagerProxy(IBinder remote) {

mRemote = remote;

}

public IBinder asBinder() {

return mRemote;

}

public IBinder getService(String name) throws RemoteException {

Parcel data = Parcel.obtain();

Parcel reply = Parcel.obtain();

data.writeInterfaceToken(IServiceManager.descriptor);

data.writeString(name);

mRemote.transact(GET_SERVICE_TRANSACTION, data, reply, 0);

IBinder binder = reply.readStrongBinder();

reply.recycle();

data.recycle();

return binder;

}

...

}
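The asInterface pattern seen above in both ILedService.Stub and ServiceManagerNative can be reduced to a few lines of plain Java. The Demo* names below are hypothetical and nothing here touches the Android SDK; the sketch only reproduces the decision itself: return the local implementation when queryLocalInterface() finds one, otherwise wrap the binder in a proxy.

```java
/** Hypothetical service interface. */
interface DemoInterface {
    String describe();
}

/** Hypothetical minimal IBinder: it can only report a local implementation, if any. */
interface DemoBinder {
    DemoInterface queryLocalInterface(String descriptor);
}

/** Same-process case: the binder object is itself the implementation. */
class DemoLocalBinder implements DemoBinder, DemoInterface {
    static final String DESCRIPTOR = "demo.IService"; // made-up descriptor

    @Override
    public DemoInterface queryLocalInterface(String descriptor) {
        return DESCRIPTOR.equals(descriptor) ? this : null;
    }

    @Override
    public String describe() {
        return "local";
    }
}

/** Cross-process case: there is never a local implementation behind the binder. */
class DemoRemoteBinder implements DemoBinder {
    @Override
    public DemoInterface queryLocalInterface(String descriptor) {
        return null;
    }
}

/** Proxy that would marshal calls through the remote binder in a real system. */
class DemoProxy implements DemoInterface {
    private final DemoBinder remote;

    DemoProxy(DemoBinder remote) {
        this.remote = remote;
    }

    DemoBinder asBinder() {
        return remote;
    }

    @Override
    public String describe() {
        return "proxy";
    }
}

class DemoAsInterface {
    /** Local object if available, otherwise a freshly created proxy. */
    static DemoInterface asInterface(DemoBinder obj) {
        if (obj == null) {
            return null;
        }
        DemoInterface local = obj.queryLocalInterface(DemoLocalBinder.DESCRIPTOR);
        if (local != null) {
            return local;
        }
        return new DemoProxy(obj);
    }

    public static void main(String[] args) {
        System.out.println(asInterface(new DemoLocalBinder()).describe());
        System.out.println(asInterface(new DemoRemoteBinder()).describe());
    }
}
```

Callers therefore get the same interface type in both cases and do not need to know whether the service lives in their own process.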

/**

* Return the global "context object" of the system. This is usually

* an implementation of IServiceManager, which you can use to find

* other services.

*/

public static final native IBinder getContextObject();

// ----------------------------------------------------------------------------

static const JNINativeMethod gBinderInternalMethods[] = {

/* name, signature, funcPtr */

{ "getContextObject", "()Landroid/os/IBinder;", (void*)android_os_BinderInternal_getContextObject },

{ "joinThreadPool", "()V", (void*)android_os_BinderInternal_joinThreadPool },

{ "disableBackgroundScheduling", "(Z)V", (void*)android_os_BinderInternal_disableBackgroundScheduling },

{ "handleGc", "()V", (void*)android_os_BinderInternal_handleGc }

};

static jobject android_os_BinderInternal_getContextObject(JNIEnv* env, jobject clazz)

{

sp<IBinder> b = ProcessState::self()->getContextObject(NULL);

return javaObjectForIBinder(env, b);

}

sp<IBinder> ProcessState::getContextObject(const sp<IBinder>& caller)

{

return getStrongProxyForHandle(0);

}

sp<IBinder> ProcessState::getStrongProxyForHandle(int32_t handle)

{

sp<IBinder> result;

AutoMutex _l(mLock);

handle_entry* e = lookupHandleLocked(handle);

if (e != NULL) {

// We need to create a new BpBinder if there isn't currently one, OR we

// are unable to acquire a weak reference on this current one. See comment

// in getWeakProxyForHandle() for more info about this.

IBinder* b = e->binder;

if (b == NULL || !e->refs->attemptIncWeak(this)) {

b = new BpBinder(handle);

e->binder = b;

if (b) e->refs = b->getWeakRefs();

result = b;

} else {

// This little bit of nastyness is to allow us to add a primary

// reference to the remote proxy when this team doesn't have one

// but another team is sending the handle to us.

result.force_set(b);

e->refs->decWeak(this);

}

}

return result;

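}

Why is the argument here 0? As mentioned earlier, 0 refers to the binder object of ServiceManager, which is unique. So javaObjectForIBinder(env, b) amounts to javaObjectForIBinder(env, BpBinder(0)). From here on I will stop pasting long code for analysis, since that adds little; the conclusions matter more:

1. Look at javaObjectForIBinder, whose signature is jobject javaObjectForIBinder(JNIEnv* env, const sp<IBinder>& val). It returns a jobject, and that jobject is created mainly from the class information held in gBinderProxyOffsets. With env->SetIntField(object, gBinderProxyOffsets.mObject, (int)val.get()); it stores the BpBinder object in the field identified by gBinderProxyOffsets.mObject.

2. What gBinderProxyOffsets refers to can be found in int_register_android_os_BinderProxy: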

const char* const kBinderProxyPathName = "android/os/BinderProxy";

static int int_register_android_os_BinderProxy(JNIEnv* env)

{

...

gBinderProxyOffsets.mClass = (jclass) env->NewGlobalRef(clazz);

...

}

public IBinder getService(String name) throws RemoteException {

Parcel data = Parcel.obtain();

Parcel reply = Parcel.obtain();

data.writeInterfaceToken(IServiceManager.descriptor);

data.writeString(name);

mRemote.transact(GET_SERVICE_TRANSACTION, data, reply, 0);

IBinder binder = reply.readStrongBinder();

reply.recycle();

data.recycle();

return binder;

}

final class BinderProxy implements IBinder {

public native boolean pingBinder();

public native boolean isBinderAlive();

public IInterface queryLocalInterface(String descriptor) {

return null;

}

public boolean transact(int code, Parcel data, Parcel reply, int flags) throws RemoteException {

Binder.checkParcel(this, code, data, "Unreasonably large binder buffer");

return transactNative(code, data, reply, flags);

}

...

}

public native boolean transactNative(int code, Parcel data, Parcel reply,

int flags) throws RemoteException;

// ----------------------------------------------------------------------------

static const JNINativeMethod gBinderProxyMethods[] = {

/* name, signature, funcPtr */

{"pingBinder", "()Z", (void*)android_os_BinderProxy_pingBinder},

{"isBinderAlive", "()Z", (void*)android_os_BinderProxy_isBinderAlive},

{"getInterfaceDescriptor", "()Ljava/lang/String;", (void*)android_os_BinderProxy_getInterfaceDescriptor},

{"transactNative", "(ILandroid/os/Parcel;Landroid/os/Parcel;I)Z", (void*)android_os_BinderProxy_transact},

{"linkToDeath", "(Landroid/os/IBinder$DeathRecipient;I)V", (void*)android_os_BinderProxy_linkToDeath},

{"unlinkToDeath", "(Landroid/os/IBinder$DeathRecipient;I)Z", (void*)android_os_BinderProxy_unlinkToDeath},

{"destroy", "()V", (void*)android_os_BinderProxy_destroy},

};

static jboolean android_os_BinderProxy_transact(JNIEnv* env, jobject obj,

jint code, jobject dataObj, jobject replyObj, jint flags) // throws RemoteException

{

...

IBinder* target = (IBinder*)

env->GetLongField(obj, gBinderProxyOffsets.mObject);

...

status_t err = target->transact(code, *data, reply, flags);

...

return JNI_FALSE;

}

status_t BpBinder::transact(

uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)

{

// Once a binder has died, it will never come back to life.

if (mAlive) {

status_t status = IPCThreadState::self()->transact(

mHandle, code, data, reply, flags);

if (status == DEAD_OBJECT) mAlive = 0;

return status;

}

return DEAD_OBJECT;

}

status_t IPCThreadState::waitForResponse(Parcel *reply, status_t *acquireResult)

{

int32_t cmd;

int32_t err;

while (1) {

if ((err=talkWithDriver()) < NO_ERROR) break;

...

cmd = mIn.readInt32();

...

switch (cmd) {

case BR_TRANSACTION_COMPLETE:

if (!reply && !acquireResult) goto finish;

break;

case BR_DEAD_REPLY:

err = DEAD_OBJECT;

goto finish;

case BR_FAILED_REPLY:

err = FAILED_TRANSACTION;

goto finish;

case BR_ACQUIRE_RESULT:

{

ALOG_ASSERT(acquireResult != NULL, "Unexpected brACQUIRE_RESULT");

const int32_t result = mIn.readInt32();

if (!acquireResult) continue;

*acquireResult = result ? NO_ERROR : INVALID_OPERATION;

}

goto finish;

case BR_REPLY:

...

goto finish;

default:

err = executeCommand(cmd);

if (err != NO_ERROR) goto finish;

break;

}

}

finish:

if (err != NO_ERROR) {

if (acquireResult) *acquireResult = err;

if (reply) reply->setError(err);

mLastError = err;

}

return err;

}

if (tr.target.ptr) {

sp<BBinder> b((BBinder*)tr.cookie);

const status_t error = b->transact(tr.code, buffer, &reply, tr.flags);

if (error < NO_ERROR) reply.setError(error);

} else {

const status_t error = the_context_object->transact(tr.code, buffer, &reply, tr.flags);

if (error < NO_ERROR) reply.setError(error);

}

status_t BBinder::transact(

uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags)

{

data.setDataPosition(0);

status_t err = NO_ERROR;

switch (code) {

case PING_TRANSACTION:

reply->writeInt32(pingBinder());

break;

default:

err = onTransact(code, data, reply, flags);

break;

}

if (reply != NULL) {

reply->setDataPosition(0);

}

return err;

}

virtual status_t onTransact(

uint32_t code, const Parcel& data, Parcel* reply, uint32_t flags = 0)

{

JNIEnv* env = javavm_to_jnienv(mVM);

LOGV("onTransact() on %p calling object %p in env %p vm %p\n", this, mObject, env, mVM);

IPCThreadState* thread_state = IPCThreadState::self();

const int strict_policy_before = thread_state->getStrictModePolicy();

thread_state->setLastTransactionBinderFlags(flags);

jboolean res = env->CallBooleanMethod(mObject, gBinderOffsets.mExecTransact,

code, (int32_t)&data, (int32_t)reply, flags);

...

return res != JNI_FALSE ? NO_ERROR : UNKNOWN_TRANSACTION;

}

It is now clear that the call goes through gBinderOffsets.mExecTransact. So what is gBinderOffsets.mExecTransact?

const char* const kBinderPathName = "android/os/Binder";

static int int_register_android_os_Binder(JNIEnv* env)

{

jclass clazz;

clazz = env->FindClass(kBinderPathName);

LOG_FATAL_IF(clazz == NULL, "Unable to find class android.os.Binder");

gBinderOffsets.mClass = (jclass) env->NewGlobalRef(clazz);

gBinderOffsets.mExecTransact

= env->GetMethodID(clazz, "execTransact", "(IIII)Z");

assert(gBinderOffsets.mExecTransact);

gBinderOffsets.mObject

= env->GetFieldID(clazz, "mObject", "I");

assert(gBinderOffsets.mObject);

return AndroidRuntime::registerNativeMethods(

env, kBinderPathName,

gBinderMethods, NELEM(gBinderMethods));

}

private boolean execTransact(int code, int dataObj, int replyObj,

int flags) {

Parcel data = Parcel.obtain(dataObj);

Parcel reply = Parcel.obtain(replyObj);

// theoretically, we should call transact, which will call onTransact,

// but all that does is rewind it, and we just got these from an IPC,

// so we'll just call it directly.

boolean res;

try {

res = onTransact(code, data, reply, flags);

} catch (RemoteException e) {

reply.writeException(e);

res = true;

} catch (RuntimeException e) {

reply.writeException(e);

res = true;

} catch (OutOfMemoryError e) {

RuntimeException re = new RuntimeException("Out of memory", e);

reply.writeException(re);

res = true;

}

reply.recycle();

data.recycle();

return res;

}

public boolean onTransact(int code, Parcel data, Parcel reply, int flags)

{

try {

switch (code) {

case IServiceManager.GET_SERVICE_TRANSACTION: {

data.enforceInterface(IServiceManager.descriptor);

String name = data.readString();

IBinder service = getService(name);

reply.writeStrongBinder(service);

return true;

}

...

...

}

} catch (RemoteException e) {

}

return false;

}

virtual sp<IBinder> getService(const String16& name) const

{

unsigned n;

for (n = 0; n < 5; n++){

sp<IBinder> svc = checkService(name);

if (svc != NULL) return svc;

LOGI("Waiting for service %s...\n", String8(name).string());

sleep(1);

}

return NULL;

}

virtual sp<IBinder> checkService( const String16& name) const

{

Parcel data, reply;

data.writeInterfaceToken(IServiceManager::getInterfaceDescriptor());

data.writeString16(name);

remote()->transact(CHECK_SERVICE_TRANSACTION, data, &reply);

return reply.readStrongBinder();

}

switch(txn->code) {

case SVC_MGR_GET_SERVICE:

case SVC_MGR_CHECK_SERVICE:

s = bio_get_string16(msg, &len);

ptr = do_find_service(bs, s, len);

if (!ptr)

break;

bio_put_ref(reply, ptr);

return 0;

void *do_find_service(struct binder_state *bs, uint16_t *s, unsigned len)

{

struct svcinfo *si;

si = find_svc(s, len);

// ALOGI("check_service('%s') ptr = %p\n", str8(s), si ? si->ptr : 0);

if (si && si->ptr) {

return si->ptr;

} else {

return 0;

}

}

struct svcinfo *find_svc(uint16_t *s16, unsigned len)

{

struct svcinfo *si;

for (si = svclist; si; si = si->next) {

if ((len == si->len) &&

!memcmp(s16, si->name, len * sizeof(uint16_t))) {

return si;

}

}

return 0;

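}

As for how a service gets inserted into this linked list, you can probably guess: the ServiceManager.addService call from the registration steps earlier ends up as a list insertion at the bottom layer, and it goes through much the same path as the one analyzed above. If you are interested, create a SourceInsight project and trace it yourself.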
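The lookup and insertion on that list can be sketched in Java as well. This is a toy rendering of the idea behind svclist and find_svc, not a port of the service manager: ToyServiceRegistry and its methods are hypothetical names, the handle is a plain int, and rejecting duplicate names and handle 0 are simplifying choices made only for this sketch.

```java
/** Hypothetical singly linked registry, loosely modeled on the svcinfo list. */
class ToyServiceRegistry {
    private static final class Entry {
        final String name;
        final int handle;
        final Entry next;

        Entry(String name, int handle, Entry next) {
            this.name = name;
            this.handle = handle;
            this.next = next;
        }
    }

    private Entry head; // plays the role of svclist

    /** Walks the list like find_svc; returns 0 when the name is not registered. */
    int find(String name) {
        for (Entry e = head; e != null; e = e.next) {
            if (e.name.equals(name)) {
                return e.handle;
            }
        }
        return 0;
    }

    /** Inserts a new entry at the head of the list. */
    void add(String name, int handle) {
        if (handle == 0) {
            // Sketch-only rule: 0 is used above to mean "not found".
            throw new IllegalArgumentException("handle 0 is reserved in this sketch");
        }
        if (find(name) != 0) {
            // Sketch-only rule: duplicates are rejected to keep the example short.
            throw new IllegalArgumentException("already registered: " + name);
        }
        head = new Entry(name, handle, head);
    }
}

class ToyRegistryDemo {
    public static void main(String[] args) {
        ToyServiceRegistry registry = new ToyServiceRegistry();
        registry.add("led", 7);
        System.out.println(registry.find("led"));      // 7
        System.out.println(registry.find("vibrator")); // 0, not registered
    }
}
```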

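References:

1. 红茶一杯话Binder (Talking About Binder over a Cup of Black Tea)

3. 简单说Binder (Binder Explained Simply)

4. Deep Dive into Android IPC/Binder Framework

6. Binder那点事儿 (A Few Things About Binder)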
