Getting Started with Kaggle: Solving the Digit Recognition Problem with scikit-learn


@author: wepon

@blog: http://blog.csdn.net/u012162613

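1. Introduction to scikit-learn

scikit-learn is an open-source machine learning toolkit written in Python and built on NumPy, SciPy, and Matplotlib. It mainly covers classification, regression, and clustering, with algorithms such as kNN, SVM, logistic regression, naive Bayes, random forests, and k-means. The code and documentation on the official site are both very good, which makes it a convenient and powerful tool for machine learning developers and saves a lot of development time.

scikit-learn user guide: http://scikit-learn.org/stable/user_guide.html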

2. Solving Digit Recognition with scikit-learn

(1) Processing the data

- loadTrainData(): reads the training samples from train.csv and returns trainData and trainLabel.
- loadTestData(): reads the test samples from test.csv and returns testData.
- toInt(array) and nomalizing(array): helpers called by loadTrainData() and loadTestData(). toInt() converts an array of strings to integers; nomalizing() normalizes those integers by setting every nonzero value to 1.
- loadTestResult(): loads the reference labels for the test samples, used for the comparison later on.
- saveResult(result,csvName): saves result to a CSV file named csvName.

In the data-processing part, we read the training features, the training labels, and the test features from train.csv and test.csv. In the program they are called trainData, trainLabel, and testData.
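The loading and binarizing steps described above can also be written without explicit loops. The sketch below is an alternative under stated assumptions, not the author's code: it relies on numpy.loadtxt, and the helper name load_binarized and the tiny in-memory CSV are hypothetical, used only for illustration.

```python
import io

import numpy as np


def load_binarized(csv_file, has_label=True):
    """Load a Digit Recognition style CSV and binarize the pixels.

    Hypothetical helper: skips the header row, optionally splits off the
    first column as the label, and maps every nonzero pixel to 1.
    """
    raw = np.loadtxt(csv_file, delimiter=',', skiprows=1, dtype=int, ndmin=2)
    if has_label:
        labels, pixels = raw[:, 0], raw[:, 1:]
    else:
        labels, pixels = None, raw
    return (pixels != 0).astype(int), labels


# Tiny stand-in for train.csv: a header plus two samples of three pixels each.
sample = io.StringIO("label,pixel0,pixel1,pixel2\n7,0,128,255\n3,12,0,0\n")
trainData, trainLabel = load_binarized(sample)
print(trainData)
print(trainLabel)
```

For a real file, pass the path (for example 'train.csv') instead of the StringIO buffer, and use has_label=False for test.csv.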

(2) Calling the algorithms in scikit-learn

    # Use scikit-learn's kNN classifier
    from numpy import ravel
    from sklearn.neighbors import KNeighborsClassifier

    def knnClassify(trainData, trainLabel, testData):
        # Default is k = 5; to choose your own: KNeighborsClassifier(n_neighbors=10)
        knnClf = KNeighborsClassifier()
        knnClf.fit(trainData, ravel(trainLabel))
        testLabel = knnClf.predict(testData)
        saveResult(testLabel, 'sklearn_knn_Result.csv')
        return testLabel

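The kNN classifier lets you set the parameter k yourself. The default is k=5, as noted in the comment above.

For more detailed usage, see the official documentation: http://scikit-learn.org/stable/modules/neighbors.html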
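To make the role of k concrete, here is a from-scratch majority-vote kNN in plain Python. It is only an illustration of the idea, not scikit-learn's implementation; the function name knn_predict and the toy data are made up for this sketch.

```python
from collections import Counter


def knn_predict(train_x, train_y, query, k=5):
    """Classify `query` by majority vote among its k nearest training points."""
    # Squared Euclidean distance is enough for ranking neighbors.
    dists = [
        (sum((a - b) ** 2 for a, b in zip(x, query)), label)
        for x, label in zip(train_x, train_y)
    ]
    nearest = sorted(dists, key=lambda pair: pair[0])[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Toy binarized "images" with three pixels each (made-up data).
train_x = [(0, 0, 1), (0, 1, 1), (1, 0, 0), (1, 1, 0), (1, 0, 1)]
train_y = [0, 0, 1, 1, 1]
query = (0, 0, 1)

for k in (1, 3, 5):
    print(k, knn_predict(train_x, train_y, query, k=k))
```

With k=1 and k=3 the closest points decide the vote; with k=5 every training point votes, so the majority class wins regardless of distance. This is why the choice of k matters.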

    # Use scikit-learn's SVM classifier
    from numpy import ravel
    from sklearn import svm

    def svcClassify(trainData, trainLabel, testData):
        # Defaults: C=1.0, kernel='rbf'. Other kernels you can try:
        # 'linear', 'poly', 'rbf', 'sigmoid', 'precomputed'
        svcClf = svm.SVC(C=5.0)
        svcClf.fit(trainData, ravel(trainLabel))
        testLabel = svcClf.predict(testData)
        saveResult(testLabel, 'sklearn_SVC_C=5.0_Result.csv')
        return testLabel

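SVC() takes many parameters. The kernel defaults to 'rbf' (radial basis function), and C defaults to 1.0.

For more detailed usage, see the official documentation: http://scikit-learn.org/stable/modules/svm.html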
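As a small aside on what the 'rbf' kernel computes: for two vectors it is exp(-gamma * ||x - y||^2). The sketch below evaluates that formula in plain Python. The function name rbf_kernel and the gamma value are chosen for illustration only; scikit-learn picks its own default gamma.

```python
import math


def rbf_kernel(x, y, gamma):
    """RBF kernel value exp(-gamma * ||x - y||^2) for two vectors."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * sq_dist)


a = (0, 1, 1)
b = (0, 1, 1)
c = (1, 0, 0)
gamma = 0.5  # illustrative value, not a recommendation

print(rbf_kernel(a, b, gamma))  # identical vectors
print(rbf_kernel(a, c, gamma))  # vectors that differ in all three pixels
```

Identical vectors give the maximum similarity of 1, and the value decays toward 0 as the vectors move apart; a larger gamma makes that decay faster.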

    # Use scikit-learn's naive Bayes classifiers: GaussianNB and MultinomialNB
    from numpy import ravel
    from sklearn.naive_bayes import GaussianNB  # naive Bayes for Gaussian-distributed data

    def GaussianNBClassify(trainData, trainLabel, testData):
        nbClf = GaussianNB()
        nbClf.fit(trainData, ravel(trainLabel))
        testLabel = nbClf.predict(testData)
        saveResult(testLabel, 'sklearn_GaussianNB_Result.csv')
        return testLabel

    from sklearn.naive_bayes import MultinomialNB  # naive Bayes for multinomially distributed data

    def MultinomialNBClassify(trainData, trainLabel, testData):
        # Default alpha=1.0. Setting alpha = 1 is called Laplace smoothing,
        # while alpha < 1 is called Lidstone smoothing.
        nbClf = MultinomialNB(alpha=0.1)
        nbClf.fit(trainData, ravel(trainLabel))
        testLabel = nbClf.predict(testData)
        saveResult(testLabel, 'sklearn_MultinomialNB_alpha=0.1_Result.csv')
        return testLabel

Above I tried two naive Bayes variants: Gaussian and multinomial. The multinomial classifier has a parameter alpha that you can set yourself.
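The effect of alpha can be seen from the smoothed estimate that multinomial naive Bayes uses for each feature, (N_yi + alpha) / (N_y + alpha * n), where N_yi is the count of feature i in class y, N_y is the total count for the class, and n is the number of features. The following plain-Python sketch evaluates that formula; the function name smoothed_likelihoods and the counts are made up.

```python
def smoothed_likelihoods(feature_counts, alpha):
    """Return (N_yi + alpha) / (N_y + alpha * n) for each feature i of one class."""
    n = len(feature_counts)
    total = sum(feature_counts)
    return [(count + alpha) / (total + alpha * n) for count in feature_counts]


# Made-up counts of how often three pixels were "on" within one digit class.
counts = [3, 0, 1]

laplace = smoothed_likelihoods(counts, alpha=1.0)   # Laplace smoothing
lidstone = smoothed_likelihoods(counts, alpha=0.1)  # Lidstone smoothing
print(laplace)
print(lidstone)
```

A pixel that never appeared in the class still gets a nonzero probability, and a smaller alpha keeps the estimates closer to the raw frequencies.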

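Every classifier above is used in the same way. Taking SVC as the example:

- Create the classifier and set its parameters: svcClf=svm.SVC(C=5.0)
- Train it on the training data: svcClf.fit(trainData,ravel(trainLabel)). In fit(X,y), X is the training data (trainData here) and y is the corresponding labels (trainLabel here).
- Predict the labels of the test samples: testLabel=svcClf.predict(testData)
- Save the result: saveResult(testLabel,'sklearn_SVC_C=5.0_Result.csv')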
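Because all four classifiers share the same fit and predict interface, they can be driven from one loop. The sketch below assumes a reasonably recent scikit-learn and uses its bundled load_digits dataset (8x8 images) as a stand-in for the Kaggle CSV files, with a held-out split so the accuracy can be checked locally. The split size and random_state are arbitrary choices for illustration.

```python
from sklearn.datasets import load_digits
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import GaussianNB, MultinomialNB
from sklearn.neighbors import KNeighborsClassifier
from sklearn.svm import SVC

# load_digits is a small digit set bundled with scikit-learn; it stands in
# for train.csv here so the sketch runs on its own.
digits = load_digits()
X = (digits.data != 0).astype(int)  # binarize, as nomalizing() does
X_train, X_val, y_train, y_val = train_test_split(
    X, digits.target, test_size=0.25, random_state=0
)

classifiers = {
    'knn': KNeighborsClassifier(),
    'svc': SVC(C=5.0),
    'gaussian_nb': GaussianNB(),
    'multinomial_nb': MultinomialNB(alpha=0.1),
}

scores = {}
for name, clf in classifiers.items():
    clf.fit(X_train, y_train)               # same call for every classifier
    scores[name] = clf.score(X_val, y_val)  # mean accuracy on held-out data
    print(name, round(scores[name], 3))
```

Scoring on a held-out split gives a rough local estimate before spending a Kaggle submission; the numbers on this small dataset say nothing about the scores on the competition data.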

(3)make a submission

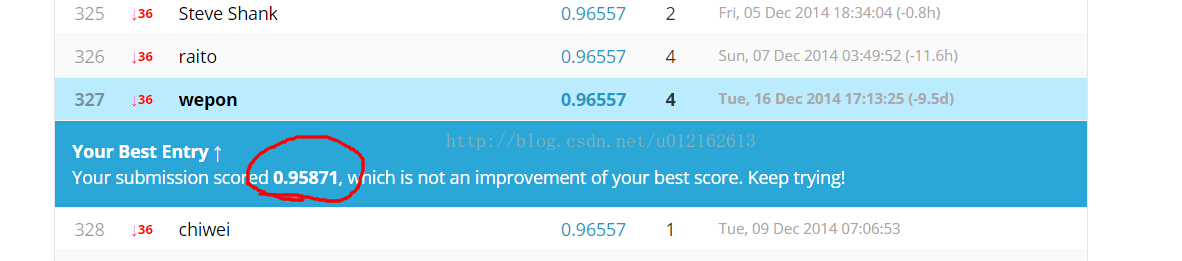

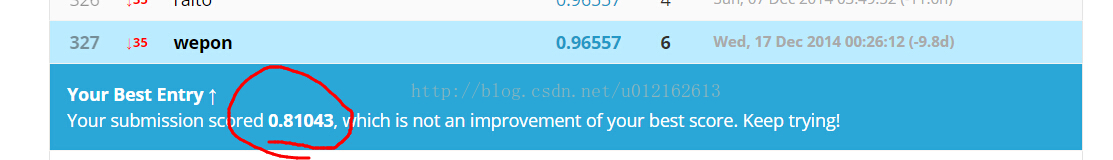

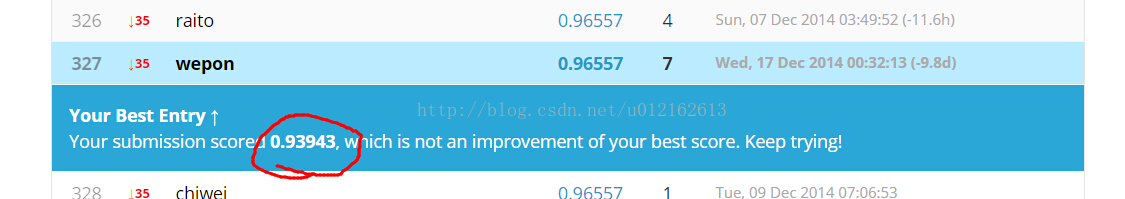

上面基本就是整个开发过程了,下面看一下各个算法的效果,在Kaggle上make a submission
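A submission file can be written with the standard csv module. The sketch below assumes the competition expects a header row ImageId,Label followed by one row per test image, numbered from 1; check the sample submission file on the competition page before relying on it. The function name write_submission is hypothetical and is not part of the project file.

```python
import csv
import io


def write_submission(labels, out_file):
    """Write labels as ImageId,Label rows, with ImageId starting at 1."""
    writer = csv.writer(out_file, lineterminator='\n')
    writer.writerow(['ImageId', 'Label'])
    for image_id, label in enumerate(labels, start=1):
        # Cast to int so that float labels such as 2.0 are written as 2.
        writer.writerow([image_id, int(label)])


# An in-memory buffer stands in for a real file opened with
# open('submission.csv', 'w', newline='').
buffer = io.StringIO()
write_submission([2.0, 0.0, 9.0], buffer)
print(buffer.getvalue())
```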

3. Project file

    #!/usr/bin/python
    # -*- coding: utf-8 -*-
    """
    Created on Tue Dec 16 21:59:00 2014
    @author: wepon
    @blog:http://blog.csdn.net/u012162613
    """
    import csv

    from numpy import array, atleast_2d, ravel, shape, zeros
    from sklearn import svm
    from sklearn.naive_bayes import GaussianNB, MultinomialNB
    from sklearn.neighbors import KNeighborsClassifier


    def toInt(array):
        # Convert an array of numeric strings to numbers.
        # A 1-D input becomes a 1 x n array.
        array = atleast_2d(array)
        m, n = shape(array)
        newArray = zeros((m, n))
        for i in range(m):
            for j in range(n):
                newArray[i, j] = int(array[i, j])
        return newArray


    def nomalizing(array):
        # Set every nonzero value to 1 (in place).
        m, n = shape(array)
        for i in range(m):
            for j in range(n):
                if array[i, j] != 0:
                    array[i, j] = 1
        return array


    def loadTrainData():
        l = []
        with open('train.csv', newline='') as file:
            lines = csv.reader(file)
            for line in lines:
                l.append(line)  # 42001*785
        l.remove(l[0])  # drop the header row
        l = array(l)
        label = l[:, 0]
        data = l[:, 1:]
        # Returns trainData (42000*784) and trainLabel (1*42000)
        return nomalizing(toInt(data)), toInt(label)


    def loadTestData():
        l = []
        with open('test.csv', newline='') as file:
            lines = csv.reader(file)
            for line in lines:
                l.append(line)  # 28001*784
        l.remove(l[0])  # drop the header row
        data = array(l)
        # Returns testData (28000*784)
        return nomalizing(toInt(data))


    def loadTestResult():
        l = []
        with open('knn_benchmark.csv', newline='') as file:
            lines = csv.reader(file)
            for line in lines:
                l.append(line)  # 28001*2
        l.remove(l[0])  # drop the header row
        label = array(l)
        return toInt(label[:, 1])  # label 1*28000


    # result is the list of predicted labels
    # csvName is the name of the CSV file that stores the result
    def saveResult(result, csvName):
        with open(csvName, 'w', newline='') as myFile:
            myWriter = csv.writer(myFile)
            for i in result:
                myWriter.writerow([i])


    # Use scikit-learn's kNN classifier
    def knnClassify(trainData, trainLabel, testData):
        # Default is k = 5; to choose your own: KNeighborsClassifier(n_neighbors=10)
        knnClf = KNeighborsClassifier()
        knnClf.fit(trainData, ravel(trainLabel))
        testLabel = knnClf.predict(testData)
        saveResult(testLabel, 'sklearn_knn_Result.csv')
        return testLabel


    # Use scikit-learn's SVM classifier
    def svcClassify(trainData, trainLabel, testData):
        # Defaults: C=1.0, kernel='rbf'. Other kernels you can try:
        # 'linear', 'poly', 'rbf', 'sigmoid', 'precomputed'
        svcClf = svm.SVC(C=5.0)
        svcClf.fit(trainData, ravel(trainLabel))
        testLabel = svcClf.predict(testData)
        saveResult(testLabel, 'sklearn_SVC_C=5.0_Result.csv')
        return testLabel


    # Use scikit-learn's naive Bayes classifiers: GaussianNB and MultinomialNB
    def GaussianNBClassify(trainData, trainLabel, testData):
        nbClf = GaussianNB()  # naive Bayes for Gaussian-distributed data
        nbClf.fit(trainData, ravel(trainLabel))
        testLabel = nbClf.predict(testData)
        saveResult(testLabel, 'sklearn_GaussianNB_Result.csv')
        return testLabel


    def MultinomialNBClassify(trainData, trainLabel, testData):
        # Naive Bayes for multinomially distributed data. Default alpha=1.0.
        # Setting alpha = 1 is called Laplace smoothing,
        # while alpha < 1 is called Lidstone smoothing.
        nbClf = MultinomialNB(alpha=0.1)
        nbClf.fit(trainData, ravel(trainLabel))
        testLabel = nbClf.predict(testData)
        saveResult(testLabel, 'sklearn_MultinomialNB_alpha=0.1_Result.csv')
        return testLabel


    def digitRecognition():
        trainData, trainLabel = loadTrainData()
        testData = loadTestData()
        # Try the different algorithms
        result1 = knnClassify(trainData, trainLabel, testData)
        result2 = svcClassify(trainData, trainLabel, testData)
        result3 = GaussianNBClassify(trainData, trainLabel, testData)
        result4 = MultinomialNBClassify(trainData, trainLabel, testData)

        # Compare a result with the given knn_benchmark, using result1 as the example
        resultGiven = loadTestResult()
        m, n = shape(testData)
        different = 0  # number of labels in result1 that differ from the benchmark
        for i in range(m):
            if result1[i] != resultGiven[0, i]:
                different += 1
        print(different)
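The comparison loop at the end of digitRecognition() can also be written as a vectorized NumPy expression. This is a sketch with made-up labels; the function name count_different is hypothetical. Note that it measures agreement with the benchmark predictions, which is not the same as accuracy against the true labels.

```python
import numpy as np


def count_different(predicted, reference):
    """Return how many labels differ and the fraction that agree."""
    predicted = np.ravel(predicted)
    reference = np.ravel(reference)
    different = int(np.sum(predicted != reference))
    agreement = 1.0 - different / predicted.size
    return different, agreement


# Made-up labels; the reference is 1 x n, like the array loadTestResult() returns.
result = np.array([1, 7, 3, 3, 0])
resultGiven = np.array([[1, 7, 8, 3, 0]])

different, agreement = count_different(result, resultGiven)
print(different, agreement)
```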
